INTRODUCTION

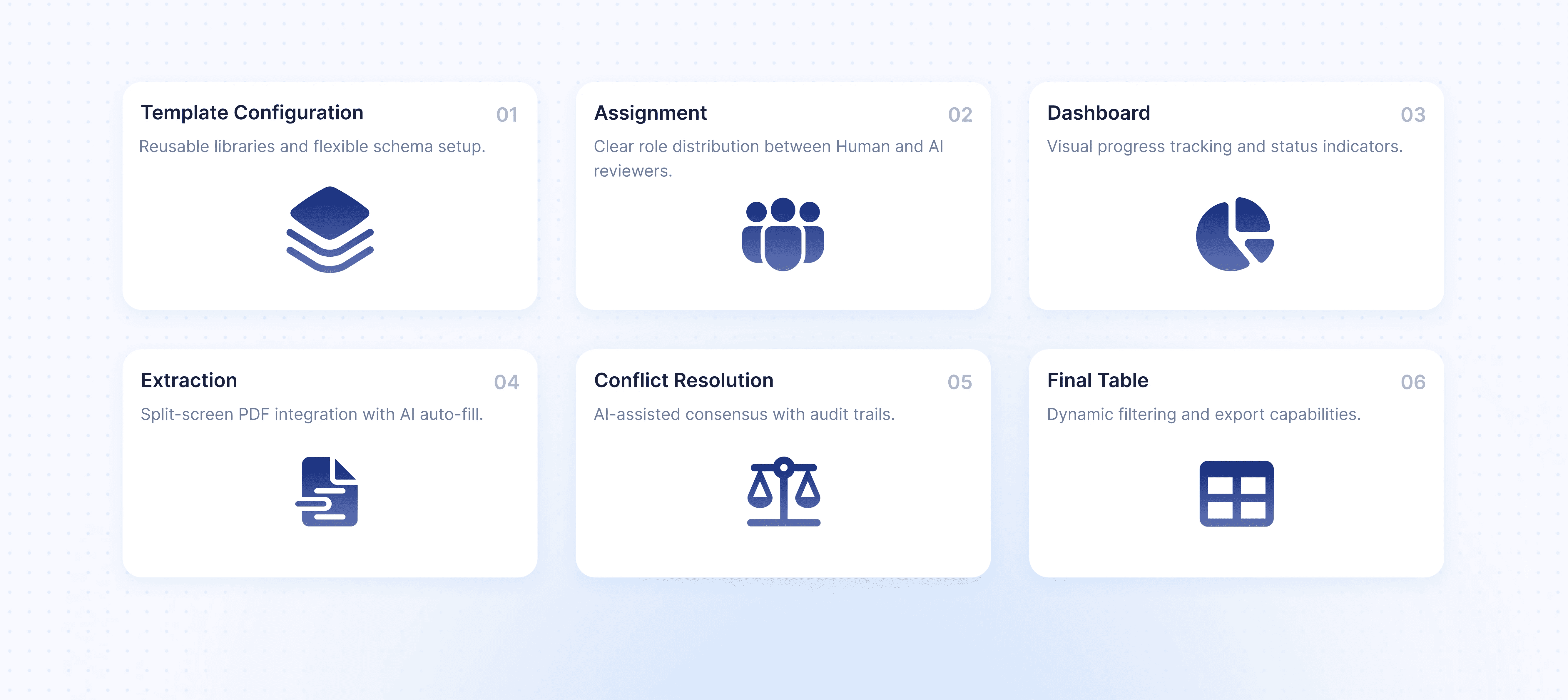

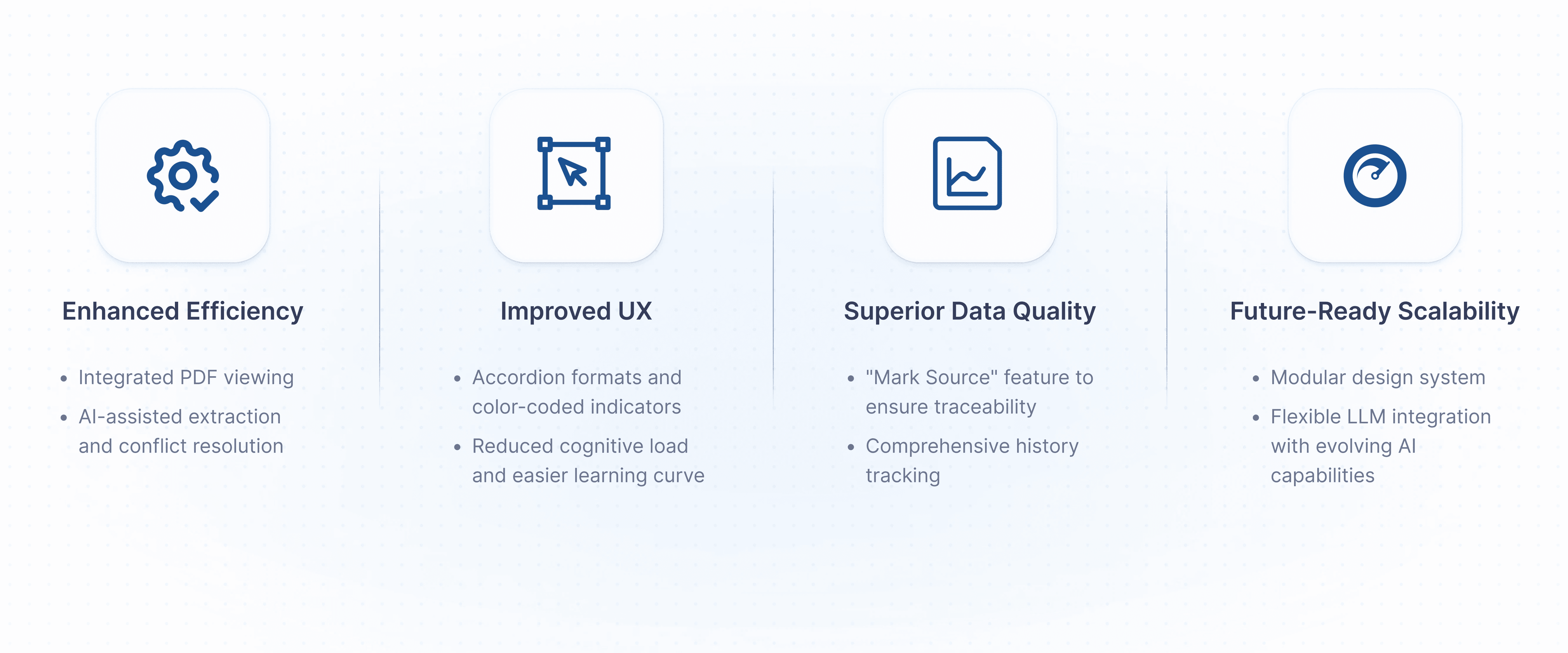

This project is a modern Human–AI collaborative platform designed to streamline data extraction for Living Systematic Reviews (LSRs). Working with Mayo Clinic subject matter experts (SMEs), we redesigned the LSR workflow to reduce cognitive load, integrate transparent AI assistance, and support flexible human-only, hybrid, and AI-assisted review models. The result is a scalable, research-ready interface that accelerates evidence synthesis while maintaining clinical rigor.

MY ROLE

UX Designer (Research, Workflow Design, Human–AI Interaction, Prototyping)

TIMELINE

12 weeks

THE TEAM

Mayo Clinic SMEs, 5 UX course students

TOOLS USED

Figma, Miro, LLMs, PDF analysis tools

THE CHALLENGE

Living Systematic Reviews are designed to keep medical evidence continuously up to date, yet the workflows that support them remain slow, fragmented, and cognitively demanding. Reviewers must manually extract and verify data across thousands of PDFs using disconnected tools, leading to fatigue, delays, and increased risk of error. While AI has the potential to accelerate this process, existing solutions lack the transparency and traceability required for clinical trust. These challenges are not merely operational inefficiencies; they directly affect how quickly validated evidence reaches clinical practice.

Why this matters: In healthcare and research settings, speed without trust is unusable. If reviewers cannot clearly verify where data comes from or how it was generated, AI-assisted workflows fail to gain adoption, regardless of efficiency gains.

WHAT I SET OUT TO LEARN

Rather than jumping to interface solutions, we framed our work around three key questions:

"Where does cognitive load peak during the extraction workflow?"

"What prevents reviewers from trusting AI-assisted tools?"

"How can Human–AI collaboration remain flexible without disrupting existing mental models?"

RESEARCH & EVALUATION

Methods used: co-design with SMEs, domain constraints, and trust requirements.

CONCEPT EXPLORATION

DESIGN STRATEGY

IMPACT SUMMARY

FUTURE STEPS

LEARNINGS

This project taught me how to design for trust in high-stakes environments, approach AI as a UX challenge rather than a technical one, and think in systems instead of screens. Collaborating with domain experts and working within real-world constraints strengthened my ability to design thoughtful, scalable workflows that balance efficiency with accountability.